PyReason

PyReason is a powerful Python-based temporal first-order logic explainable AI system supporting multi-step inference, uncertainty, open-world reasoning, and graph-based syntax.

Read the latest PyReason docs here: https://pyreason.readthedocs.io/en/latest

PyReason-as-a-Sim is now available: it uses PyReason as a simulator within a reinforcement learning framework.

Preprint: https://arxiv.org/abs/2310.06835

Code for PyReason-as-a-Sim (integration with DQN): https://github.com/lab-v2/pyreason-rl-sim

Code for PyReason Gym: https://github.com/lab-v2/pyreason-gym

• Supports generalized annotated logic with temporal, graphical, and uncertainty extensions, capturing a wide variety of fuzzy, real-valued, interval, and temporal logics

• Modern Python-based system supporting reasoning on graph-based data structures (e.g., graphs exported from Neo4j or stored as GraphML)

• Rule-based reasoning that supports uncertainty, open-world reasoning, non-ground rules, quantification, and more, while remaining agnostic to design choices such as the t-norm

• Fast, highly optimized, and correct fixpoint-based deduction that enables explainable AI reasoning and scales to graphs with over 30 million edges
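
The bullets above describe interval-valued (annotated) truth, open-world defaults, and fixpoint deduction over graphs. The toy Python sketch below illustrates those ideas under stated assumptions; it is not PyReason's API or its algorithm. It assumes a hypothetical graph of `friends` edges, a hypothetical rule popular(x) ← popular(y), friends(x, y) that fires one timestep after its body holds, and bounds that can only be narrowed by intersection. Atoms with no information start at [0, 1] (unknown), and the loop stops early once an update changes nothing, i.e., at a fixpoint.

```python
from typing import Dict, Iterable, List, Tuple

Interval = Tuple[float, float]   # annotation [lower, upper] within [0, 1]
UNKNOWN: Interval = (0.0, 1.0)   # open-world default: truth value not known


def tighten(old: Interval, new: Interval) -> Interval:
    """Intersect two bounds; in this sketch updates may only narrow an interval."""
    lower, upper = max(old[0], new[0]), min(old[1], new[1])
    if lower > upper:
        raise ValueError(f"inconsistent bounds {old} and {new}")
    return (lower, upper)


def reason(nodes: Iterable[str],
           edges: Iterable[Tuple[str, str]],
           facts: Dict[str, Interval],
           timesteps: int,
           threshold: float = 1.0) -> List[Dict[str, Interval]]:
    """Apply the hypothetical rule popular(x) <- popular(y), friends(x, y)
    with a one-timestep delay until `timesteps` is reached or nothing changes.
    Returns one interpretation (node -> bound for `popular`) per timestep."""
    edges = list(edges)
    interp = {n: UNKNOWN for n in nodes}
    for node, bound in facts.items():
        interp[node] = tighten(interp.get(node, UNKNOWN), bound)
    history = [dict(interp)]
    for _ in range(timesteps):
        nxt = dict(interp)                 # bounds persist into the next timestep
        for x, y in edges:                 # edge (x, y) encodes friends(x, y)
            if interp[y][0] >= threshold:  # body holds: popular(y) is confidently true
                nxt[x] = tighten(nxt[x], (1.0, 1.0))
        if nxt == interp:                  # fixpoint: no bound changed
            break
        interp = nxt
        history.append(dict(interp))
    return history
```

For example, with hypothetical nodes Mary, Justin, and John, edges (Justin, Mary) and (John, Justin), and the fact popular(Mary) = [1, 1], Justin becomes popular at timestep 1, John at timestep 2, and the run stops after timestep 2 because nothing changes afterward. Raising on an empty intersection is just one simple policy for inconsistent bounds, chosen here for clarity.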

Read the PyReason paper (with supplement):

https://arxiv.org/pdf/2302.13482.pdf

Introductory blog post:

https://medium.com/towards-nesy/pyreason-software-for-open-world-temporal-logic-d67de751830e

The open-source Python library is available on PyPI: pypi.org/project/pyreason

The PyReason codebase can be found at:

github.com/lab-v2/pyreason

Install with pip:

pip install pyreason
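
To confirm the install from Python, one option is the standard library's `importlib.metadata`. This is a generic packaging check, not a PyReason feature, and `installed_version` is a hypothetical helper name:

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str = "pyreason") -> Optional[str]:
    """Return the installed version string, or None if the package is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    print(installed_version() or "pyreason is not installed; run: pip install pyreason")
```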

Videos:

Introduction: https://youtu.be/E1PSl3KQCmo

Technical talk: https://youtu.be/G4-jcb2ktKg

Slides:

Introduction to PyReason v1.1

BibTeX citation:

@inproceedings{aditya_pyreason_2023,
  title = {{PyReason}: Software for Open World Temporal Logic},
  booktitle = {{AAAI} Spring Symposium},
  author = {Aditya, Dyuman and Mukherji, Kaustuv and Balasubramanian, Srikar and Chaudhary, Abhiraj and Shakarian, Paulo},
  year = {2023}
}

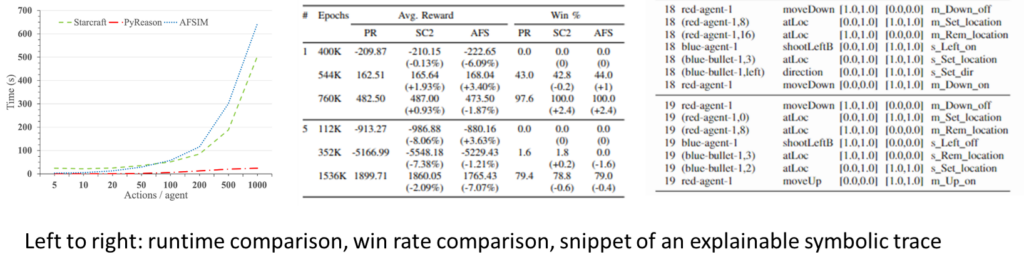

PyReason-as-a-Sim for Deep Reinforcement Learning

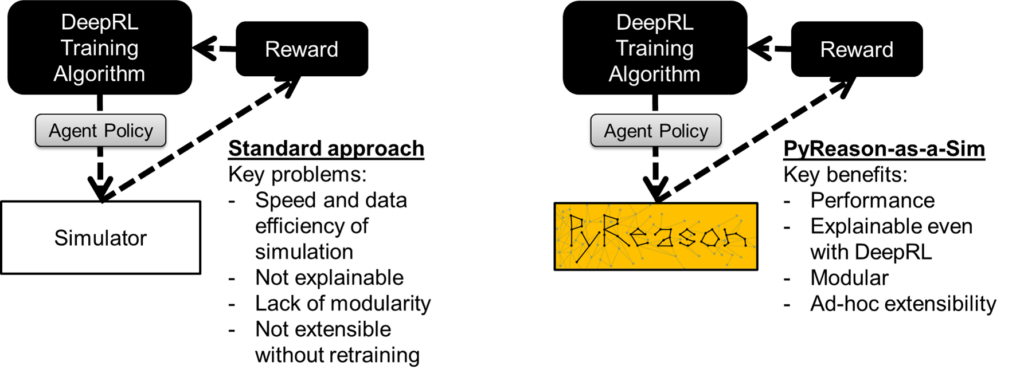

PyReason can function as a semantic proxy for simulation in a reinforcement learning (RL) framework. We showed that inference with a PyReason logic program can provide a speedup of three orders of magnitude compared with native simulations (we studied AFSIM and Starcraft2) while providing comparable reward and win rate (we found that PyReason-trained agents actually performed better than expected in both AFSIM and Starcraft2).

However, the benefits of our semantic proxy go well beyond performance. The use of temporal logic programming has two crucial by-products: symbolic explainability and modularity. PyReason provides an explainable symbolic trace that captures the evolution of the environment precisely, while modularity allows us to add or remove aspects of the logic program, so the simulation can be adjusted based on a library of behaviors. PyReason is also well suited to modeling simulated environments for other reasons, namely its ability to directly capture non-Markovian relationships and its open-world nature (defaults are “uncertain” instead of true or false). We have demonstrated that agents can be trained in this framework using standard RL techniques such as DQN.
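
To make the semantic-proxy idea concrete, here is a minimal, self-contained Python sketch of an environment whose transitions come from rules rather than from a native simulator. Everything in it is hypothetical: the `LogicSimEnv` class, the `attack`/`wait` actions, and the rule that a target is `destroyed` after two consecutive attacks are illustrative assumptions, not the pyreason-gym or pyreason-rl-sim API, and no DQN agent is included. It shows the properties described above in miniature: unknown atoms default to [0, 1] (open world), the rule reads the history of states (non-Markovian), rules live in a named dictionary that can be edited (modularity), and every derived atom is logged to a trace (explainability).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Interval = Tuple[float, float]
UNKNOWN: Interval = (0.0, 1.0)  # open-world default: neither true nor false
TRUE: Interval = (1.0, 1.0)
FALSE: Interval = (0.0, 0.0)

State = Dict[str, Interval]
# A rule sees the whole history of states, so it can express non-Markovian conditions.
Rule = Callable[[List[State]], State]


def bound_of(state: State, atom: str) -> Interval:
    """Look up an atom, falling back to the open-world default."""
    return state.get(atom, UNKNOWN)


def destroyed_rule(history: List[State]) -> State:
    """Hypothetical rule: destroyed <- attack at t and attack at t-1."""
    recent = history[-2:]
    if len(recent) == 2 and all(bound_of(s, "attack")[0] >= 1.0 for s in recent):
        return {"destroyed": TRUE}
    return {}


@dataclass
class LogicSimEnv:
    """Hypothetical rule-driven environment (not the pyreason-gym API)."""
    actions: Tuple[str, ...]
    rules: Dict[str, Rule]  # modular: add or remove rules by name
    max_steps: int = 10
    history: List[State] = field(default_factory=list)
    trace: List[Tuple[int, str, str, Interval]] = field(default_factory=list)

    def __post_init__(self) -> None:
        self.reset()

    def reset(self) -> State:
        self.history = [{}]  # initially every atom is UNKNOWN
        self.trace = []
        return {}

    def step(self, action: str) -> Tuple[State, float, bool]:
        t = len(self.history)
        state = dict(self.history[-1])  # derived atoms persist between steps
        for a in self.actions:          # the chosen action is a fact for this step only
            state[a] = TRUE if a == action else FALSE
        self.history.append(state)
        for name, rule in self.rules.items():
            for atom, bound in rule(self.history).items():
                state[atom] = bound                        # updates the stored state
                self.trace.append((t, name, atom, bound))  # symbolic explanation
        destroyed = bound_of(state, "destroyed") == TRUE
        reward = 1.0 if destroyed else 0.0
        done = destroyed or t >= self.max_steps
        return dict(state), reward, done
```

Calling `step("attack")` twice in a row yields reward 1.0 and ends the episode, while an `attack`, `wait`, `attack` sequence does not; passing `rules={}` removes the behavior entirely, which is the kind of modular adjustment described above. An RL agent could drive `reset` and `step` in a loop resembling a Gym-style interface, although this sketch returns a simplified (observation, reward, done) tuple.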

Preprint: https://arxiv.org/abs/2310.06835

Video: https://youtu.be/9e6ZHJEJzgw

Code for PyReason-as-a-Sim (integration with DQN): https://github.com/lab-v2/pyreason-rl-sim

Code for PyReason Gym: https://github.com/lab-v2/pyreason-gym